Text to Speech API: A Creator's Guide to AI Voices

You’ve written the script. You’ve opened your recording app. You’ve done three takes of the first paragraph and already hate all of them.

Maybe your room sounds echoey. Maybe your voice gets flat after the second sentence. Maybe you’re building YouTube videos, course lessons, or podcast segments regularly, and recording every line has become the slowest part of your process. Hiring a voice actor can solve that, but for many creators it adds scheduling, revision rounds, and extra cost.

That’s why so many creators have shifted to text to speech (TTS) API tools. A modern TTS setup lets you take a script and turn it into polished spoken audio without booking studio time or wrestling with your mic settings. This isn't just a developer feature anymore. It has become a practical production tool for people who make content every week.

That demand is showing up across the market. The global text to speech API market was valued at USD 2.2 billion in 2023, driven by voice-enabled apps, AI improvements, and the need for accessible content across platforms like YouTube and e-learning, according to Ken Research's text to speech API market report.

Introduction: The End of Bad Voiceovers

A lot of creators hit the same wall. The script is good, the visuals are almost done, but the voiceover becomes the bottleneck.

A YouTuber making documentary-style videos might spend more time fixing breaths, misreads, and volume jumps than editing the story itself. A course creator might avoid updating lessons because re-recording old modules feels like starting from scratch. A podcaster might want clean intros, sponsored segments, or alternate versions, but not another late-night recording session.

That’s where modern TTS changes the equation. Instead of thinking of it as a robotic reader, it helps to think of it as a voice production layer. You write your words, choose the kind of performance you want, and generate audio that fits the project.

Why creators care now

Older computer voices sounded stiff because they treated speech like a pronunciation exercise. Newer systems treat it more like performance. They can handle pacing, sentence rhythm, and in many cases much more nuanced delivery than people expect.

For a creator, that means TTS isn't only for accessibility menus or phone systems anymore. It can be used for:

- YouTube narration: Explainers, list videos, commentary, tutorials

- Course lessons: Clean, repeatable narration across a full curriculum

- Short-form video: Fast voiceovers for reels, shorts, and social content

- Audio repurposing: Turning written content into listenable versions

Practical rule: If voice recording keeps delaying publishing, the problem probably isn’t your script. It’s your production workflow.

The biggest shift is psychological. You no longer need to be “good at voiceover” to publish polished spoken content. You need a clear script and a tool that gives you enough control over the final delivery.

What Is a Text to Speech API, Really?

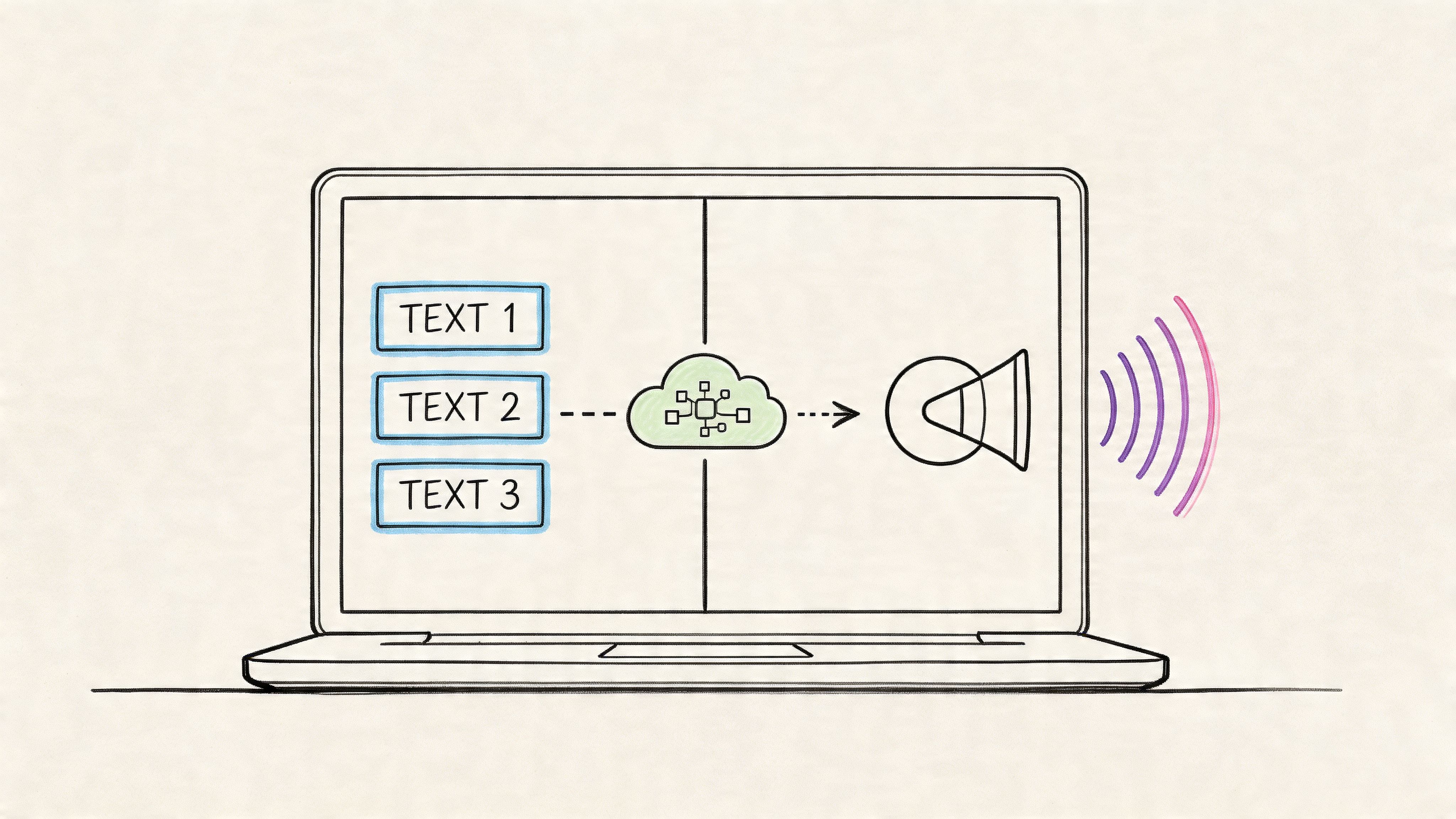

The term sounds technical, but the basic idea is simple.

A text to speech API is a service that takes your written text, sends it to a speech engine, and returns audio. If “API” feels abstract, use this analogy: it’s like a waiter in a restaurant. You tell the waiter what you want, the waiter passes it to the kitchen, and the finished order comes back to you. You don’t need to walk into the kitchen yourself.

For creators, that matters because many online tools you already use work the same way. If you’ve ever wondered how platforms connect posting tools to social networks, this MicroPoster explainer on social media APIs gives a helpful non-developer view of the same idea.

The simple mental model

You can break a TTS API into three parts:

- Your script: This is the text you want spoken. It could be a video narration, course lesson, podcast intro, or product explainer.

- The speech engine: This is the AI system that decides how the words should sound. It handles pronunciation, rhythm, pauses, and voice style.

- The audio output: You get a playable result such as an audio file or a stream you can preview right away.

That’s the whole loop. Text goes in. Speech comes out.
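If you're curious what that loop looks like from the developer side, here is a minimal Python sketch. The endpoint URL, the field names (`text`, `voice`, `format`), and the voice name `narrator-1` are placeholders invented for illustration, since every real service defines its own. The code only builds the request; it doesn't send anything.

```python
import json
import urllib.request


def build_tts_request(script, voice="narrator-1", audio_format="mp3",
                      endpoint="https://api.example.com/v1/speech",
                      api_key="YOUR_API_KEY"):
    """Package a script as an HTTP POST request for a hypothetical TTS service."""
    # Hypothetical payload shape: real providers use their own field names.
    payload = {"text": script, "voice": voice, "format": audio_format}
    return urllib.request.Request(
        endpoint,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


request = build_tts_request("Welcome back to the channel.")
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
```

Sending the request and saving the response bytes to a file would complete the loop. A creator application does the same thing behind its "Generate" button.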

Why it doesn’t sound like old-school computer speech

A lot of people still hear “text to speech” and picture a monotone GPS voice from years ago. That’s outdated.

Modern systems can sound much more natural because they model speech more like a human performance. They don’t just read words one by one. They shape the line. They decide where a pause should land, how a phrase rises or falls, and how quickly a sentence should move.

If you want a broader look at how current voice tools differ from older generators, this guide to text to speech voice generator tools is useful background.

Think of the API as the delivery route, not the voice itself. The quality you hear depends on the engine behind it and the controls you get on top.

That distinction helps when you compare tools later. Some services are powerful but code-heavy. Others package that power into something a creator can use without touching documentation.

How Modern TTS APIs Actually Work

Under the hood, modern TTS works a lot like performing music from sheet music.

A script doesn’t become audio in one magical jump. The system goes through a few layers: it interprets the text, predicts how the speech should flow, and then generates the actual sound wave you hear.

First the system cleans the script

Before a voice can “perform” your words, the model has to understand them.

This step is often where abbreviations, punctuation, numbers, and odd formatting get sorted out. If your script says “Dr.” or includes dates, brand names, or bullet fragments, the system tries to decide how those should be spoken aloud. You can think of this as a proofreader preparing a script for a narrator.

This matters more than many creators realize. If the text is messy, the voice output often sounds messy too.
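You can do some of this cleanup yourself before the text ever reaches a voice engine. The sketch below is a deliberately naive Python example: the abbreviation table and the rules are assumptions chosen for illustration, and real engines use far more context (for instance, "Dr." can also mean "Drive").

```python
import re

# Illustrative table only; extend it with the terms your own scripts use.
ABBREVIATIONS = {"Dr.": "Doctor", "vs.": "versus", "e.g.": "for example"}


def normalize_script(text):
    """Rewrite a few common written forms the way a narrator would say them."""
    for short, spoken in ABBREVIATIONS.items():
        text = text.replace(short, spoken)
    # "90%" becomes "90 percent"
    text = re.sub(r"(\d+)%", r"\1 percent", text)
    text = text.replace("&", "and")
    # Drop bullet markers at the start of lines so they aren't read aloud.
    text = re.sub(r"^\s*[-*•]\s+", "", text, flags=re.MULTILINE)
    # Collapse runs of spaces or tabs left over from copy and paste.
    text = re.sub(r"[ \t]+", " ", text)
    return text.strip()


print(normalize_script("Dr. Lee  cut costs by 90% & time"))
```

Even a small pass like this removes the most common causes of odd readings, such as stray symbols and bullet fragments.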

Then it plans the performance

Once the words are understood, the engine predicts how the speech should sound over time. This is the part that feels like composition.

The model decides things like:

- Rhythm: Which phrases should move quickly and which need breathing room

- Pitch movement: Where the sentence rises, drops, or emphasizes a word

- Timing: How long pauses should feel between ideas

- Stress: Which words deserve extra focus

If that sounds abstract, compare it to giving an actor a script and some direction. The words stay the same, but the delivery changes everything.

Finally it creates the audio

The last stage turns that speech plan into the final waveform. This is the audio file or stream you listen to.

Some tools do this fast enough that playback can begin before the full clip is finished generating. OpenAI’s text to speech documentation, for example, describes realtime audio streaming via chunked transfer encoding, along with output formats such as MP3 for general use, Opus for low-latency streaming, and AAC for platforms like YouTube and iOS.

That’s useful in practical creator workflows. You can preview faster, test short revisions, and fit the export to the platform where the audio will live.
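To make the streaming idea concrete, here is a small Python sketch. The format choices follow the ones mentioned above; the use-case labels (`general`, `live_preview`, and so on) are made up for this example, and the byte chunks are stand-ins for audio that a real service would deliver over the network.

```python
import io

# Format suggestions from the text above; the keys are hypothetical labels.
FORMAT_BY_USE = {
    "general": "mp3",
    "live_preview": "opus",
    "youtube": "aac",
    "ios": "aac",
}


def pick_format(use_case):
    """Return a suggested audio format, falling back to MP3."""
    return FORMAT_BY_USE.get(use_case, "mp3")


def save_stream(chunks, sink):
    """Write audio chunks to a file-like object as they arrive."""
    total = 0
    for chunk in chunks:
        sink.write(chunk)  # a player could start reading here, before the end
        total += len(chunk)
    return total


buffer = io.BytesIO()
written = save_stream([b"fake-audio-1", b"fake-audio-2"], buffer)
print(pick_format("youtube"), written)
```

The point of the loop is that each chunk is usable the moment it arrives, which is why previews can start before generation finishes.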

If you're comparing how different voices feel in finished projects, this roundup of realistic text to speech voices gives helpful listening criteria.

A strong TTS result usually comes from two things working together: a clean script and a model that handles timing well.

Creators often assume bad output means “AI voices still sound fake.” Sometimes the problem is simpler. The text needed better punctuation, clearer sentence breaks, or more deliberate phrasing.

Key Features That Give You Creative Control

The difference between a basic voice generator and a useful creator tool comes down to control.

If a platform only lets you paste text and click play, you’ll get audio. But you won’t get much authorship. Good TTS tools give you the same kind of choices a director gives a narrator: who is speaking, how fast they move, where they pause, and what emotional texture the line should carry.

Voice choice is casting

Voice selection isn’t a cosmetic feature. It’s casting.

A business tutorial needs a different sound than a horror story channel. A calm course lesson needs a different delivery than a fast social video. The “right” voice is the one that supports the format and audience, not the one that sounds most dramatic in a demo.

When evaluating voice libraries, listen for:

- Clarity: Can people follow the words easily on first listen?

- Fit: Does the tone match your content category?

- Consistency: Does the voice stay stable across longer scripts?

- Accent coverage: Can you match the audience you’re speaking to?

Pacing and pauses shape meaning

A creator usually notices this after the first few projects. A script can be technically correct and still sound wrong because the pacing is off.

Speed, pitch, and pause controls let you make narration feel more deliberate. A slower read can add authority. Shorter pauses can make a listicle feel punchier. A slight pitch adjustment can soften a voice that sounds too stern for educational content.

The easiest way to improve AI narration is often not changing the voice. It’s changing the timing.

Here’s a simple example. If your script says, “Today we’re covering three editing mistakes beginners make,” the delivery can sound either rushed and disposable or clear and teacher-like depending on the pause after “covering” and the emphasis on “three.”
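On services that accept SSML, a W3C markup standard for speech synthesis, that direction can be written into the script itself. The Python sketch below builds the markup for the sentence above, with a pause after “covering” and stress on “three.” The 300 ms pause and the 95% rate are arbitrary starting points, and support for individual tags varies by provider, so treat this as an assumption to check against your tool's documentation.

```python
import xml.etree.ElementTree as ET
from xml.sax.saxutils import escape


def pause(milliseconds):
    """SSML break element for a timed pause."""
    return f'<break time="{milliseconds}ms"/>'


def stress(word):
    """SSML emphasis element around a single word."""
    return f'<emphasis level="strong">{escape(word)}</emphasis>'


def build_ssml(parts, rate="95%"):
    """Wrap already-escaped parts in speak and prosody elements."""
    body = "".join(parts)
    return f'<speak><prosody rate="{rate}">{body}</prosody></speak>'


ssml = build_ssml([
    escape("Today we're covering"),
    pause(300),
    " ",
    stress("three"),
    escape(" editing mistakes beginners make."),
])

ET.fromstring(ssml)  # raises ParseError if the markup is not well-formed
print(ssml)
```

Creator applications usually expose the same controls as sliders or inline markers, so you rarely need to write this by hand.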

Pronunciation is not a small feature

Brand names, product terms, surnames, and niche jargon often expose weak tools quickly.

If you make software tutorials, finance explainers, or educational content, you’ll eventually need pronunciation control. Without it, the voice may stumble over terms your audience expects you to know. Good pronunciation editing helps protect credibility.
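When a tool has no built-in pronunciation editor, one common workaround is to respell tricky terms before generating audio. Here is a minimal Python sketch of that idea. The lexicon entries are examples of common spoken forms rather than anything a specific service requires, and the matching is case-sensitive and limited to whole words.

```python
import re


def apply_pronunciations(text, lexicon):
    """Replace whole-word terms with a phonetic respelling."""
    if not lexicon:
        return text
    # Longest terms first so "SQLite" would win over "SQL" if both were listed.
    terms = sorted(lexicon, key=len, reverse=True)
    pattern = re.compile(r"\b(" + "|".join(re.escape(t) for t in terms) + r")\b")
    return pattern.sub(lambda match: lexicon[match.group(1)], text)


lexicon = {"nginx": "engine x", "SQL": "sequel"}
print(apply_pronunciations("Install nginx, then open SQL.", lexicon))
```

Keeping the lexicon in one place means every video in a series pronounces the same term the same way.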

Later in the creative process, richer direction becomes important too.

Emotion is moving beyond manual tags

A major shift in recent TTS tools is that emotion no longer depends only on rigid markup. According to Speechmatics’ overview of top TTS APIs, advanced services are moving beyond simple SSML (Speech Synthesis Markup Language) tags for emotion. Platforms like Hume use an LLM to interpret prompts such as “sound sarcastic” or “whisper fearfully” and adjust tone, rhythm, and cadence automatically.

That matters for creators because it feels more like direction than coding.

Instead of manually fine-tuning every phrase, you can think in performance language:

- For commentary videos: “Sound confident but conversational”

- For story channels: “Build suspense gradually”

- For course narration: “Speak clearly and patiently”

- For short-form hooks: “Open with more urgency”

Voice cloning changes repeatability

Voice cloning deserves careful use, but it’s powerful for creators who want continuity.

If you need a recognizable identity across many projects, cloned voices can help you keep a stable sound without re-recording every update. It’s especially useful when you need revisions, alternate versions, or scalable production across formats.

The creative takeaway is simple. A modern TTS platform shouldn’t just read text. It should help you direct a performance.

The Benefits for Your Content Creation Workflow

Most creators don’t adopt TTS because they’re fascinated by APIs. They adopt it because they want to publish more consistently without burning time on recording.

The workflow gains are hard to ignore. According to Fortune Business Insights on speech technology growth, the broader speech technology market is projected to grow at a 20.66% CAGR, and for podcasters and YouTubers, AI voices can cut production time by up to 80% and costs by 90% compared to traditional voice talent.

What that changes day to day

For a solo creator, that can mean:

- Faster revisions: Rewrite one paragraph and regenerate that section instead of re-recording an entire take

- Lower production friction: No mic setup, retakes, or cleanup every time you need narration

- More output: Turn one script into a YouTube version, a short-form cut, and an audio-only version

- Better consistency: Use the same voice style across a full series or course library

Those gains stack up over time. The less energy you spend on getting “a usable recording,” the more energy you can put into writing, editing, and publishing.
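The "faster revisions" point is easy to automate if you work with an API directly. The Python sketch below compares two versions of a script and reports which paragraphs need new audio. It assumes paragraphs are separated by blank lines and that unchanged paragraphs can reuse the audio you already generated; both are assumptions about your workflow, not features of any particular service.

```python
import hashlib


def paragraph_hashes(script):
    """Return (fingerprint, paragraph) pairs for each non-empty paragraph."""
    paragraphs = [p.strip() for p in script.split("\n\n") if p.strip()]
    return [(hashlib.sha256(p.encode("utf-8")).hexdigest(), p) for p in paragraphs]


def paragraphs_to_regenerate(old_script, new_script):
    """List paragraphs in the new script that have no matching old audio."""
    already_rendered = {digest for digest, _ in paragraph_hashes(old_script)}
    return [p for digest, p in paragraph_hashes(new_script)
            if digest not in already_rendered]


old = "Intro.\n\nStep one.\n\nOutro."
new = "Intro.\n\nStep one, revised.\n\nOutro."
print(paragraphs_to_regenerate(old, new))
```

Only the edited paragraph comes back, so one small rewrite costs one small regeneration instead of a full re-record.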

The less obvious advantage is scalability

A lot of creators first use TTS as a shortcut. Then they realize it also helps them scale.

If you create educational or evergreen content, you can update modules quickly. If you run multiple channels or formats, you can keep tone more consistent. If you want to experiment with multilingual or platform-specific variants, TTS makes that much less intimidating.

For a broader look at how creators combine automation across their stack, Vidito's AI tools guide is a useful companion read.

Workflow check: If publishing one narrated asset requires a recording session every time, your process won’t scale cleanly.

That’s the business case. TTS doesn’t just save effort on one project. It removes a recurring production choke point.

Common Use Cases in Modern Content

The easiest way to understand a text to speech API is to look at where creators use it.

The technology shows up in very different formats, but the pattern is the same. Someone has a script, needs usable spoken audio quickly, and wants more control than a default robotic reader can offer.

YouTube narration

Faceless channels are the clearest example.

A history creator can write a documentary-style script, pair it with archival visuals, and generate narration that sounds measured and clean. A finance channel can produce explainers with stable delivery across every episode. A list-based entertainment channel can create voiceovers quickly enough to maintain a frequent posting schedule.

If you're exploring that model, this guide on how people monetize faceless YouTube channels gives useful context around the broader workflow.

Online courses and training

Course creators often need consistency more than drama.

If you’re narrating ten lessons across one training program, your students shouldn’t feel like the instructor changed mics, energy level, or speaking style every few videos. TTS helps keep that delivery steadier. It also makes updates easier when one slide, one rule, or one product detail changes.

Podcasts and audio segments

Not every podcast wants full AI narration, but many can use TTS strategically.

Creators use it for intros, outros, transitions, recap segments, sponsor reads, or companion audio versions of written content. It’s especially helpful when you need a clean pickup line and don’t want to re-open your whole recording setup for fifteen seconds of audio.

Short-form video and social content

Short-form creators work on tighter timelines.

If you make TikToks, Reels, or Shorts, speed matters. You may want to test multiple hooks, alternate versions, or a different tone for a trend-driven post. TTS lets you draft faster, swap lines quickly, and keep momentum without waiting for a recording block.

Audiobooks and script testing

Authors and storytellers also use TTS before they commit to final narration choices.

A synthetic voice can help you hear pacing problems, awkward dialogue, or repetitive sentence structure. Even when it isn’t the final published version, it’s a practical way to audition the script with sound.

Some creators use TTS as the final voice. Others use it as a fast listening draft. Both are valid.

The common thread is efficiency with control. You’re not just converting text into sound. You’re turning written work into a format people can consume more easily.

How to Choose the Right Text to Speech Service

Choosing a TTS tool gets confusing because many services look similar in a feature list. The real differences show up when you try to make actual content with them.

The first filter should be voice quality. If the output sounds stiff, nothing else matters. Quality can be measured in structured listening tests. For example, Inworld AI’s TTS benchmark overview notes that its model achieved an Elo score of 1,236 based on thousands of blind user preference tests, with latency under 250 ms, which helped reduce robotic artifacts and improve naturalness.
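If Elo scores are new to you, the short Python sketch below shows how to read one. It uses the standard Elo expected-score formula from chess ratings. Whether a given TTS leaderboard uses exactly this formula is an assumption, and the competing model rated 1,136 is hypothetical.

```python
def expected_preference(rating_a, rating_b):
    """Standard Elo estimate of how often A is preferred over B."""
    return 1 / (1 + 10 ** ((rating_b - rating_a) / 400))


# A 100-point gap: the higher-rated voice wins about 64% of blind comparisons.
share = expected_preference(1236, 1136)
print(f"{share:.0%}")
```

In other words, the number only means something relative to the other voices on the same leaderboard, so compare gaps rather than absolute scores.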

The criteria that matter most

When you compare services, focus on these questions:

- Does the voice sound natural for your kind of content? A customer service voice and a YouTube narration voice are not the same thing.

- Can you control delivery? Look for pitch, pace, pauses, pronunciation, and expressive prompting.

- How many languages and accents are available? This matters if you publish internationally or want audience-specific versions.

- How easy is the workflow? A powerful engine is less useful if every small change requires code or technical setup.

- Can the tool handle your real script lengths and revisions? Demo clips can hide workflow friction.
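Script length is worth testing early because many services cap how much text a single request can contain. A common approach is to split the script at sentence boundaries and generate the pieces in order. The Python sketch below shows one way to do that; the 1,000-character default is an arbitrary placeholder, so check the actual limit of the service you use.

```python
import re


def split_script(script, max_chars=1000):
    """Group whole sentences into chunks no longer than max_chars."""
    sentences = re.split(r"(?<=[.!?])\s+", script.strip())
    chunks, current = [], ""
    for sentence in sentences:
        candidate = f"{current} {sentence}".strip()
        if current and len(candidate) > max_chars:
            # Adding this sentence would overflow, so close the current chunk.
            chunks.append(current)
            current = sentence
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks


print(split_script("One two. Three four. Five six.", max_chars=20))
```

A single sentence longer than the limit is kept whole rather than cut mid-word, so very long sentences still need a manual edit.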

Raw API versus creator application

Some people really do need a raw API. If you’re building software, that makes sense. But many creators don’t want to send requests, manage formats, or wire outputs into another system. They want to paste a script, direct the read, and export the file.

| Factor | Raw API (e.g., Google TTS) | Creator Application (e.g., Lazybird) |
|---|---|---|
| Setup | Usually requires developer work | Usually starts with a visual interface |
| Control access | Powerful, but often technical | Creator-friendly controls are easier to find |
| Workflow speed | Good for products and automation | Good for day-to-day media production |
| Best for | Apps, platforms, custom integrations | YouTubers, podcasters, educators, marketers |
| Revision process | Often handled in code | Often handled directly in the editor |

Three provider categories

Big tech APIs are often strong on infrastructure. They’re useful when a team wants to build TTS into software products.

Specialist AI platforms often push quality and expressive features further. They can be a strong fit for teams comfortable evaluating models and workflows in detail.

Creator-focused applications make the most sense when your main goal is producing voiceovers without managing technical plumbing. If you want an example of that category, this overview of AI voice generator options includes tools built for content workflows rather than developer implementation. One creator-oriented option is Lazybird, which offers a browser-based workflow with over 200 voices, support for 100+ languages and accents, and controls for pitch, speed, pauses, and pronunciation, plus AI voice cloning and access to stock media in the same platform.

Choose the tool that matches your bottleneck. If your problem is shipping content, a creator workflow matters more than raw technical flexibility.

That single decision saves a lot of frustration.

Get Started Instantly with a Creator-First Platform

A raw text to speech API can be powerful, but power and usability aren’t the same thing.

If you’re a developer building an app, direct API access may be exactly what you need. If you’re a creator trying to finish a YouTube video, course module, or podcast segment today, the more practical path is usually a tool that puts those voice models behind a clean interface.

That’s what makes creator-first platforms appealing. You can focus on script quality, voice selection, pacing, and export. You don’t need to think about request formatting, streaming behavior, or audio handling logic. You just need the final narration to sound right.

The best fit is usually the one that gives you enough depth without forcing technical overhead. For creators, that often means looking for a platform with a broad voice library, language coverage, performance controls, and a workflow built around production rather than software integration.

A practical creator workflow should let you do four things smoothly:

- Pick a fitting voice for your channel, course, or brand

- Adjust the delivery with pitch, speed, pauses, tone, and pronunciation

- Reuse a consistent identity with voice cloning when appropriate

- Build faster by keeping media assets and voice production close together

If that sounds closer to how you work than managing a raw API, you’re already looking in the right category.

If you want to turn scripts into polished voiceovers without wrestling with developer tools, try Lazybird. It’s built for creators who need realistic AI voices, control over delivery, voice cloning, and a simple workflow for producing content fast.